Paper Close Reading: MapReduce

Last updated: 2 years ago

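These are mainly notes on papers that 兔兔子 (that's me) has only just started studying. My understanding of them may still be incomplete, so corrections are very welcome! I expect the papers from 6.824 and 6.828 will each get a short note here!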

MapReduce

Abstract

What is MapReduce?

- A programming model and an associated implementation for processing and generating large data sets.

How to use it?

- Users specify a map function that processes a key/value pair to generate a set of intermediate key/value pairs.

- Users specify a reduce function that merges all intermediate values associated with the same intermediate key.

What’s the meaning of MapReduce?

Programs are automatically parallelized and executed on a large cluster of commodity machines.

Runtime system’s job:

partition the input data

schedule the program’s execution across a set of machines

handle machine failures

manage the required inter-machine communication

Result: Programmers without experience in parallel and distributed systems can easily use the resources of a large distributed system.

1. Introduction

- The primary mechanism for fault tolerance in MapReduce is re-execution.

- Contributions of this work: a simple and powerful interface that enables automatic parallelization and distribution of large-scale computations, combined with an implementation of this interface that achieves high performance on large clusters of commodity PCs.

2. Programming Model

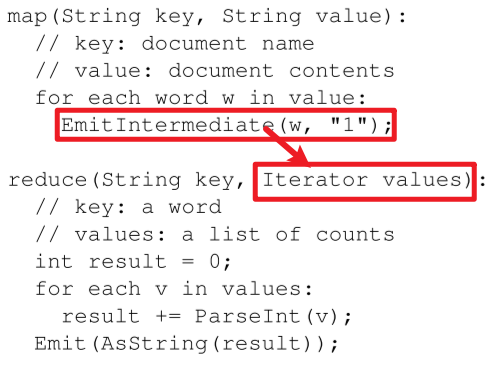

- Two functions:

- Map:

- written by the user

- The Map function takes an input pair and produces a set of intermediate key/value pairs.

- The MapReduce library groups together all intermediate values associated with the same intermediate key $I$ and passes them to the Reduce function.

- Reduce:

- also written by the user

- Reduce accepts an intermediate key $I$ and a set of values for that key.

- It merges these values to form a possibly smaller set of values. Typically just zero or one output value is produced per Reduce function invocation.

- Intermediate values are supplied to the reduce function via an iterator. It allows us to handle lists of values that are too large to fit in memory.


2.1 Example

- Count words in a large collection of documents.

- The user invokes the MapReduce function, and the user’s code is linked together with the MapReduce library.

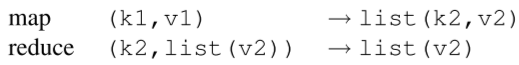
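The word-count example above can be sketched in a few lines of Python. This is only a single-process illustration: `run_sequential` is a hypothetical stand-in for the MapReduce library, and the names `map_fn` and `reduce_fn` are my own, not the paper's API.

```python
from collections import defaultdict

def map_fn(doc_name, contents):
    # key: document name, value: document contents
    for word in contents.split():
        yield word, "1"

def reduce_fn(word, counts):
    # key: a word, counts: an iterator over the emitted "1" strings
    return str(sum(int(c) for c in counts))

def run_sequential(docs):
    # Hypothetical stand-in for the library: group values by key, then reduce.
    groups = defaultdict(list)
    for name, contents in docs.items():
        for key, value in map_fn(name, contents):
            groups[key].append(value)
    return {key: reduce_fn(key, iter(values)) for key, values in sorted(groups.items())}

result = run_sequential({"a.txt": "the quick fox", "b.txt": "the lazy dog the end"})
print(result)
```

The values are passed to `reduce_fn` through an iterator, mirroring the point made in Section 2 about value lists that may not fit in memory.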

2.2 Types

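- Conceptually, the map and reduce functions supplied by the user have associated types:

- map: (k1, v1) -> list(k2, v2)

- reduce: (k2, list(v2)) -> list(v2)

Map turns each input key/value pair into intermediate k2 keys with their v2 values, and this work is handed to machines in the cluster. Reduce then gathers, for each intermediate key k2, the list(v2) made up of the results from every machine, applies its own logic (for example, summing), and emits the final result.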

2.3 More Examples

Distributed Grep: The map function emits a line if it matches a supplied pattern. The reduce function is an identity function that just copies the supplied intermediate data to the output.

Count of URL Access Frequency : Map processes logs of web page requests and outputs <URL,1>. Reduce adds all the values of the same URL and emits a <URL, total count> pair.

Reverse Web-Link Graph : Map -> <target , source> . Reduce -> <target , list(source)>

Term-Vector per Host: A term vector summarizes the most important words that occur in a document or a set of documents as a list of <word, frequency> pairs. Map -> emits a <hostname, term vector> pair for each input document (the hostname is extracted from the URL of the document). Reduce -> is passed all per-document term vectors for a given host, adds these term vectors together, throwing away infrequent terms, and then emits a final <hostname, term vector> pair.

Inverted Index:

- The map function parses each document , and emits a sequence of <word , document ID> pairs.

- The reduce function accepts all pairs for a given word , sorts the corresponding document IDs and emits a <word , list(document ID)> pair.

- Result : The set of all output pairs forms a simple inverted index. It is easy to augment this computation to keep track of word positions.

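The Inverted Index here is the familiar inverted index. MapReduce collects, for each word, the documents that contain it, with the document IDs sorted, which lets us quickly locate where a given word occurs.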

- Distributed Sort: Map -> Extracts the key from each record and emits a <key , record> pair. Reduce -> Emits all pairs unchanged. This computation depends on the partitioning facilities.
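As one concrete instance of the examples above, here is a minimal sketch of the inverted index. The function names and the in-memory `build_index` driver are assumptions for illustration; de-duplicating words per document with `set` is my own choice, not something the paper prescribes.

```python
from collections import defaultdict

def map_fn(doc_id, contents):
    # Emit one <word, document ID> pair per distinct word in the document.
    for word in set(contents.split()):
        yield word, doc_id

def reduce_fn(word, doc_ids):
    # Sort the document IDs and emit <word, list(document ID)>.
    return word, sorted(doc_ids)

def build_index(docs):
    # Hypothetical sequential driver standing in for the library's grouping step.
    groups = defaultdict(list)
    for doc_id, contents in docs.items():
        for word, d in map_fn(doc_id, contents):
            groups[word].append(d)
    return dict(reduce_fn(w, ids) for w, ids in groups.items())

index = build_index({2: "map reduce", 1: "reduce only", 3: "map map"})
print(index["reduce"], index["map"])
```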

3. Implementation

This section describes an implementation targeted to the computing environment in wide use at Google: large clusters of commodity PCs connected together with switched Ethernet.

Environment:

Machines -> typically dual-processor x86 machines running Linux, with 2-4 GB of memory per machine.

Commodity networking hardware -> typically 100 Mb/s or 1 Gb/s at the machine level, but averaging considerably less in overall bisection bandwidth. (It looks fast, but the average is much lower.)

A cluster -> hundreds or thousands of machines, so machine failures are common.

Storage -> inexpensive IDE disks directly attached to individual machines. A distributed file system developed in-house (presumably GFS) is used to manage the data stored on these disks. The file system uses replication to provide availability and reliability on top of unreliable hardware.

A scheduling system -> users submit jobs to a scheduling system. Each job consists of a set of tasks, and is mapped by the scheduler to a set of available machines within a cluster.

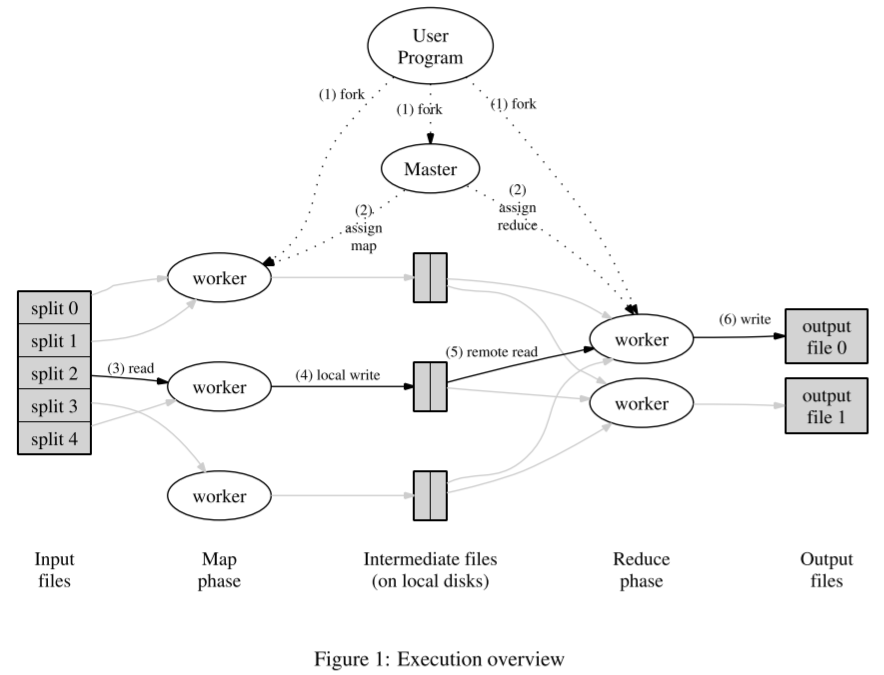

3.1 Execution Overview

- Execution overview:

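- The Map invocations are distributed across multiple machines by automatically partitioning the input data into a set of M splits. The splits can then be processed in parallel on different machines.

- The Reduce invocations are distributed by partitioning the intermediate key space into R pieces using a partitioning function (e.g., $hash(key)\ mod\ R$). The number of partitions R and the partitioning function are specified by the user. (In effect, the intermediate results are collected separately and written to different output files, which is why R has to be specified!)

The figure above shows the overall flow of a MapReduce operation in our implementation. When the user program calls the MapReduce function, the following sequence of actions occurs: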

- The MapReduce library first splits the input files into M pieces of typically 16 megabytes to 64 megabytes (MB) per piece (controllable by the user via an optional parameter). It then starts up many copies of the program on a cluster of machines.

- One of the copies is special: the master. The rest are workers that are assigned work by the master. There are M map tasks and R reduce tasks to assign. The master picks idle workers and assigns each one a map task or a reduce task.

- A worker who is assigned a map task reads the contents of the corresponding input split. It parses key/value pairs out of the input data and passes each pair to the user-defined Map function. The intermediate key/value pairs produced by the Map function are buffered in memory.

- Periodically, the buffered pairs are written to local disk, partitioned into R regions by the partitioning function. The locations of these buffered pairs on the local disk are passed back to the master, who is responsible for forwarding these locations to the reduce workers.

- When a reduce worker is notified by the master about these locations, it uses remote procedure calls to read the buffered data from the local disks of the map workers. When a reduce worker has read all intermediate data, it sorts it by the intermediate keys so that all occurrences of the same key are grouped together. The sorting is needed because typically many different keys map to the same reduce task. If the amount of intermediate data is too large to fit in memory, an external sort is used.

- The reduce worker iterates over the sorted data and, for each unique intermediate key encountered, passes the key and the corresponding set of intermediate values to the user’s Reduce function. The output of the Reduce function is appended to a final output file for this reduce partition.

- When all map tasks and reduce tasks have been completed, the master wakes up the user program. At this point, the MapReduce call in the user program returns back to the user code. (All done!)

After successful completion -> the output of the MapReduce execution is available in the R output files (one per reduce task, with file names as specified by the user). Typically, users do not need to combine these R output files into one file; they often pass these files as input to another MapReduce call, or use them from another distributed application that is able to deal with input that is partitioned into multiple files. (The R output files often just get fed into yet another MapReduce, nesting-doll style!)
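The whole flow above can be imitated in one process to make the data movement concrete. This is a rough sketch under simplifying assumptions: `run_job` is a hypothetical driver, everything lives in memory instead of on local disks, there is no master or RPC, and `zlib.crc32` stands in for the hash function because Python's built-in `hash()` on strings is salted per process.

```python
import zlib
from itertools import groupby

def partition(key, R):
    # Stable stand-in for hash(key) mod R.
    return zlib.crc32(key.encode()) % R

def run_job(splits, map_fn, reduce_fn, R):
    # Map phase: each split is one map task that fills R intermediate regions.
    regions = [[[] for _ in range(R)] for _ in splits]
    for m, split in enumerate(splits):
        for key, value in map_fn(split):
            regions[m][partition(key, R)].append((key, value))
    # Reduce phase: task r reads region r of every map task, sorts, groups, reduces.
    outputs = []
    for r in range(R):
        pairs = sorted(p for m in range(len(splits)) for p in regions[m][r])
        out = [(k, reduce_fn(k, (v for _, v in grp)))
               for k, grp in groupby(pairs, key=lambda p: p[0])]
        outputs.append(out)
    return outputs  # one list per reduce partition, like the R output files

def wc_map(text):
    for w in text.split():
        yield w, 1

def wc_reduce(key, values):
    return sum(values)

outputs = run_job(["a b a", "b c", "a"], wc_map, wc_reduce, R=2)
print(outputs)
```

Each inner list plays the role of one of the R output files, and within each the keys come out in sorted order because of the sort step before grouping.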

3.2 Master Data Structures

- The master keeps several data structures. For each map task and reduce task, it stores the state (idle, in-progress, or completed) and the identity of the worker machine (for non-idle tasks).

- The master is the conduit through which the location of intermediate file regions is propagated from map tasks to reduce tasks. Therefore, for each completed map task, the master stores the locations and sizes of the R intermediate file regions produced by the map task. (Note: just as in the figure above, by the time a map task finishes it has already partitioned its processed data into R regions and stored them!) Updates to this location and size information are received as map tasks are completed. The information is pushed incrementally to workers that have in-progress reduce tasks.
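One possible shape for this bookkeeping, as a sketch only: the class names, field names, and the `"w1:/tmp/..."` location strings are all hypothetical, and the paper does not specify the actual layout.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional

class State(Enum):
    IDLE = "idle"
    IN_PROGRESS = "in-progress"
    COMPLETED = "completed"

@dataclass
class Task:
    kind: str                      # "map" or "reduce"
    state: State = State.IDLE
    worker: Optional[str] = None   # identity of the worker, for non-idle tasks
    # For completed map tasks: region index -> (location, size in bytes)
    regions: dict = field(default_factory=dict)

@dataclass
class Master:
    map_tasks: list
    reduce_tasks: list

    def map_completed(self, m, worker, regions):
        task = self.map_tasks[m]
        task.state, task.worker, task.regions = State.COMPLETED, worker, regions
        # Incrementally "push" the new locations to in-progress reduce tasks.
        return [(r, regions[r]) for r, t in enumerate(self.reduce_tasks)
                if t.state is State.IN_PROGRESS and r in regions]

master = Master([Task("map")], [Task("reduce", State.IN_PROGRESS, "w2"), Task("reduce")])
pushed = master.map_completed(0, "w1", {0: ("w1:/tmp/m0-r0", 10), 1: ("w1:/tmp/m0-r1", 7)})
print(pushed)
```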

3.3 Fault Tolerance

- Since the MR library is designed to help process very large amounts of data using hundreds or thousands of machines, the library must tolerate machine failures gracefully.

Worker Failure

- Para1:

- The master (M) pings every worker (W) periodically. If no response is received from a worker in a certain amount of time, the master marks the worker as failed.

- Any map tasks completed by the worker are reset back to their initial idle state. -> They are eligible for scheduling on other workers.

- Similarly, any map task or reduce task in progress on a failed worker is also reset to idle and becomes eligible for rescheduling.

- Para2:

- Completed map tasks are re-executed on a failure because their output is stored on the local disk(s) of the failed machine and is therefore inaccessible.

- Completed reduce tasks do not need to be re-executed on a failure since their output is stored in a global file system. (The data already produced still exists and can still be read!)

- Para3:

- When a map task is executed first by worker A and then later executed by worker B (because A failed), all workers executing reduce tasks are notified of the re-execution. Any reduce task that has not already read the data from worker A will read the data from worker B.

- MR is resilient to large-scale worker failures. For example, during one MR operation, network maintenance on a running cluster was causing groups of 80 machines at a time to become unreachable for several minutes. The MR master simply re-executed the work done by the unreachable worker machines and continued to make forward progress, eventually completing the MapReduce operation.
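The reset rules for a failed worker can be written down compactly. This is a sketch assuming a hypothetical list-of-dicts task table; only the rules themselves (in-progress tasks and completed map tasks go back to idle, completed reduce tasks stay completed) come from the text.

```python
def handle_worker_failure(tasks, failed_worker):
    """Reset tasks of a failed worker; `tasks` is a list of dicts (hypothetical layout)."""
    rescheduled = []
    for t in tasks:
        if t["worker"] != failed_worker:
            continue
        in_progress = t["state"] == "in-progress"
        completed_map = t["state"] == "completed" and t["kind"] == "map"
        if in_progress or completed_map:
            # Map output lived on the failed machine's local disk, so redo it.
            t["state"], t["worker"] = "idle", None
            rescheduled.append(t["id"])
    return rescheduled

tasks = [
    {"id": "m0", "kind": "map", "state": "completed", "worker": "A"},
    {"id": "m1", "kind": "map", "state": "in-progress", "worker": "A"},
    {"id": "r0", "kind": "reduce", "state": "completed", "worker": "A"},
    {"id": "r1", "kind": "reduce", "state": "in-progress", "worker": "B"},
]
reset = handle_worker_failure(tasks, "A")
print(reset)
```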

Master Failure

- It is easy to make the master write periodic checkpoints of the master data structures described above. If the master task dies, a new copy can be started from the last checkpointed state. However, given that there is only a single master, its failure is unlikely; therefore our current implementation aborts the MR computation if the master dies. Clients can check for this condition and retry the MR operation if they desire. (There is only one master; if it dies, game over: the MR run is simply aborted, though it can be retried!)

Semantics in the Presence of Failures

Para1

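- When the map and reduce operators are deterministic, the result of the distributed execution should be the same as the result of a sequential execution.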

Para2

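- We rely on atomic commits of task outputs to guarantee this property. Each task writes its output to private temporary files. A reduce task produces one such file, and a map task produces R such files (one per reduce task).

- When a map task completes, the message the worker sends to the master includes the names of the R temporary files. If the master receives a completion message for a map task that is already completed, it ignores the message. Otherwise, the master records the names of the R files in a master data structure.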

Para3

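- When a reduce task completes, the reduce worker atomically renames its temporary output file to the final output file. If the same reduce task is executed on multiple machines, multiple rename calls will be executed for the same final output file.

- We rely on the atomic rename operation provided by the underlying file system (e.g., HDFS) to guarantee that the final file system state contains just the data produced by one execution of the reduce task.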
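The write-to-temp-then-rename pattern can be tried on a local file system. This is only an analogy: it uses Python's `os.replace` on a local directory rather than GFS or HDFS, and `commit_output` and the `part-00000` file name are names I made up for the illustration.

```python
import os
import tempfile

def commit_output(final_path, lines):
    # Write to a private temporary file in the same directory, then rename atomically.
    directory = os.path.dirname(final_path) or "."
    fd, tmp_path = tempfile.mkstemp(dir=directory, prefix=".tmp-")
    with os.fdopen(fd, "w") as f:
        f.writelines(line + "\n" for line in lines)
    # os.replace is atomic on POSIX when both paths are on the same file system.
    os.replace(tmp_path, final_path)

out_dir = tempfile.mkdtemp()
final = os.path.join(out_dir, "part-00000")
commit_output(final, ["a\t1", "b\t2"])   # first execution of the reduce task
commit_output(final, ["a\t1", "b\t2"])   # duplicate execution on another "machine"
with open(final) as f:
    contents = f.read()
print(os.listdir(out_dir))
```

After both executions, the directory holds exactly one final file containing the output of a single execution, and no temporary files are left behind.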

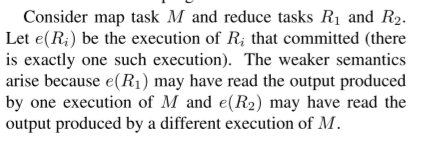

Para4

- The vast majority of our map and reduce operators are deterministic, and the fact that our semantics are equivalent to a sequential execution in this case makes it very easy for programmers to reason about their program’s behavior. (Put simply, for most MR jobs users can predict what the program will produce!)

- When the map and/or reduce operators are non-deterministic, we provide weaker but still reasonable semantics. In the presence of non-deterministic operators, the output of a particular reduce task R1 is equivalent to the output for R1 produced by a sequential execution of the non-deterministic program. (If the functions we supply are not deterministic, the guarantee is weaker: each reduce task's output matches that of some sequential execution of the non-deterministic program.)

- However, the output for a different reduce task R2 may correspond to the output for R2 produced by a different sequential execution of the non-deterministic program.

Para5

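- The figure above shows a small example:

- M -> R1 / M -> R2

- R1 may have read the output produced by one execution of M, while R2 may have read the output produced by a different execution of M!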

3.4 Locality

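- Network bandwidth is a scarce resource. We conserve it by taking advantage of the fact that the input data (managed by GFS) is stored on the local disks of the machines that make up our cluster.

- GFS divides each file into 64 MB blocks and stores several copies of each block (typically 3 copies) on different machines. The MR master takes the location information of the input files into account and attempts to schedule a map task on a machine that contains a replica of the corresponding input data.

- Failing that, it attempts to schedule a map task near a replica of that task’s input data (e.g., on a worker machine that is on the same network switch as the machine containing the data). When running large MR operations on a significant fraction of the workers in a cluster, most input data is read locally and consumes no network bandwidth. (Ideally a task runs on whichever machine already holds its input data, which saves a lot of bandwidth!)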
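The scheduling preference described above might be sketched as a three-step fallback. The function `pick_worker`, the `switch_of` mapping, and the final "any idle worker" step are assumptions for illustration; the paper only describes the first two preferences.

```python
def pick_worker(replica_hosts, idle_workers, switch_of):
    # 1) Prefer an idle worker that holds a replica of the input split.
    for w in idle_workers:
        if w in replica_hosts:
            return w
    # 2) Otherwise prefer an idle worker on the same network switch as a replica.
    replica_switches = {switch_of[h] for h in replica_hosts}
    for w in idle_workers:
        if switch_of[w] in replica_switches:
            return w
    # 3) Otherwise take any idle worker (assumed fallback).
    return idle_workers[0] if idle_workers else None

switch_of = {"a": "s1", "b": "s1", "c": "s2", "d": "s2"}
local = pick_worker({"a"}, ["c", "a"], switch_of)
nearby = pick_worker({"a"}, ["c", "b"], switch_of)
remote = pick_worker({"a"}, ["c", "d"], switch_of)
print(local, nearby, remote)
```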

3.5 Task Granularity

- Para1:

- Subdivide the map phase into M pieces and the reduce phase into R pieces. Ideally, M and R should be much larger than the number of worker machines.

- Having each worker perform many different tasks improves dynamic load balancing and also speeds up recovery when a worker fails: the many map tasks it has completed can be spread out across all the other worker machines.

- Para2:

- There are practical bounds on how large M and R can be in our implementation.

- The master must make $O(M+R)$ scheduling decisions and keep $O(M*R)$ state in memory.

- (The constant factors for memory usage are small, however: the $O(M*R)$ piece of the state consists of approximately one byte of data per map task/reduce task pair.)

- Para3:

- This part is about practical considerations.

- R is often constrained by users because the output of each reduce task ends up in a separate output file.

- In practice, we tend to choose M so that each individual task is roughly 16 MB to 64 MB of input data (so that the locality optimization described above is most effective), and we make R a small multiple of the number of worker machines we expect to use. We often perform MapReduce computations with M = 200,000 and R = 5,000, using 2,000 worker machines.
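Plugging the quoted values of M and R into the bounds gives a feel for the master's load. This is plain arithmetic, using the "approximately one byte per map/reduce pair" figure from the text.

```python
M, R, workers = 200_000, 5_000, 2_000

scheduling_decisions = M + R     # O(M + R)
state_bytes = M * R * 1          # O(M * R), about one byte per map/reduce task pair

print(scheduling_decisions)      # 205000
print(state_bytes / 2**30)       # roughly 0.93 GiB of master state
print(M / workers, R / workers)  # 100.0 map tasks and 2.5 reduce tasks per worker
```

So R is a small multiple of the worker count (2.5x), while M is two orders of magnitude larger than it.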

3.6 Backup Tasks

- One of the common causes that lengthens the total time taken for a MapReduce operation is a “straggler”: a machine that takes an unusually long time to complete one of the last few map or reduce tasks in the computation. (Some machine is lagging behind!)

- Many reasons:

- bad disk -> a machine with a bad disk may experience frequent correctable errors that slow its read performance from 30 MB/s to 1 MB/s.

- many jobs -> the cluster scheduling system may have scheduled other tasks on the machine, causing it to execute the MapReduce code more slowly due to competition for CPU, memory, local disk, or network bandwidth.

- caches disabled -> a recent problem we experienced was a bug in machine initialization code that caused processor caches to be disabled: computations on affected machines slowed down by over a factor of one hundred.

- How to solve it?

- We have a general mechanism to alleviate the problem of stragglers (English !!!)

- When a MapReduce operation is close to completion, the master schedules backup executions of the remaining in-progress tasks. The task is marked as completed whenever either the primary or the backup execution completes.

- We have tuned this mechanism so that it typically increases the computational resources used by the operation by no more than a few percent.

- Result: We have found that this significantly reduces the time to complete large MapReduce operations. As an example, the sort program described in Section 5.3 takes 44% longer to complete when the backup task mechanism is disabled. (This improves efficiency a lot!)

- In short: when a job is almost finished, the tasks still running are the ones most likely to be stuck on a problematic machine, so we launch them again on other resources and get the result sooner!
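A toy model makes the effect of backup tasks visible. This is not the paper's mechanism in detail: `finish_time` is a hypothetical helper that assumes every task starts at t=0 on its own worker, and the durations are made-up numbers.

```python
def finish_time(durations, backup_start=None, backup_duration=None):
    """Job finish time when every task starts at t=0 on its own worker.

    If backup_start is given, any task still running at that time gets a backup
    copy taking backup_duration; the task completes when either copy finishes.
    """
    finish = []
    for d in durations:
        if backup_start is not None and d > backup_start:
            d = min(d, backup_start + backup_duration)
        finish.append(d)
    return max(finish)

durations = [10, 11, 9, 12, 60]   # the last task runs on a straggler
without_backup = finish_time(durations)
with_backup = finish_time(durations, backup_start=12, backup_duration=10)
print(without_backup, with_backup)
```

Only the one straggling task gets a backup copy here, which matches the claim that the extra resource usage stays small.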

4. Refinements

- Although the basic functionality provided by simply writing Map and Reduce functions is sufficient for most needs, we have found a few extensions useful. These are described in this section.

- This section covers improvements and refinements to the MR model built on top of what came before!

4.1 Partitioning Function

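- Users specify the number of reduce tasks/output files that they desire (R). Data gets partitioned across these tasks using a partitioning function on the intermediate key. The default partitioning function is $hash(key)\ mod\ R$. This tends to result in fairly well-balanced partitions.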

- In some cases, it’s more useful to partition data by some other functions of the key.

- e.g. Sometimes the output keys are URLs, and we want all entries for a single host to end up in the same output file. To support situations like this, the user of the MR library can provide a special partitioning function. For example, using $hash(Hostname(urlkey))\ mod\ R$ as the partitioning function causes all URLs from the same host to end up in the same output file.
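Both partitioning functions are easy to sketch. The helper names are hypothetical, `zlib.crc32` is used as an assumed stable hash (the paper does not say which hash is used), and `urllib.parse.urlparse` plays the role of `Hostname`.

```python
import zlib
from urllib.parse import urlparse

def stable_hash(s):
    # Assumed hash; chosen because it is stable across processes.
    return zlib.crc32(s.encode("utf-8"))

def default_partition(key, R):
    # hash(key) mod R
    return stable_hash(key) % R

def host_partition(url_key, R):
    # hash(Hostname(urlkey)) mod R
    return stable_hash(urlparse(url_key).hostname or "") % R

R = 4
urls = ["http://example.com/a", "http://example.com/b?x=1", "http://example.org/"]
parts = [host_partition(u, R) for u in urls]
print(parts)
```

The first two URLs share a host, so they always land in the same partition regardless of R; with `default_partition` they could end up in different output files.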

4.2 Ordering Guarantees

- We guarantee that within a given partition, the intermediate key/value pairs are processed in increasing key order.

- This ordering guarantee makes it easy to generate a sorted output file per partition, which is useful when the output file format needs to support efficient random access lookups by key, or users of the output find it convenient to have the data sorted.

4.3 Combiner Function

- Para1

- In some cases, there is significant repetition in the intermediate keys produced by each map task, and the user-specified Reduce function is commutative and associative

- e.g. Word Count -> each map task produces hundreds or thousands of records of the form <the, 1>. All these counts will be sent over the network to a single reduce task and then added together by the Reduce function to produce one number.

- How to solve it? We allow the user to specify an optional Combiner function that does partial merging of this data before it is sent over the network.

- Para2

- The combiner function is executed on each machine that performs a map task.

- Typically the same code is used to implement both the combiner and the reduce functions.

- The only difference between a combiner and a Reduce function -> how the MR library handles the output of the function. (The output of a reduce function is written to the final output file. The output of a combiner function is written to an intermediate file that will be sent to a reduce task.) (Put simply, it does a bit of Reduce's work right after Map, before the data goes into the intermediate file!)

- Partial combining significantly speeds up certain classes of MapReduce operations
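For word count, a combiner is just the same summation applied to one map task's output. The sketch below uses hypothetical function names and an in-memory list in place of the intermediate file.

```python
from collections import Counter

def map_words(text):
    for w in text.split():
        yield w, 1

def combine(pairs):
    # Same logic as the reduce function (a sum), applied to one map task's output.
    partial = Counter()
    for key, value in pairs:
        partial[key] += value
    return sorted(partial.items())

raw = list(map_words("the cat the dog the end"))
combined = combine(raw)
print(len(raw), len(combined))
print(combined)
```

Six intermediate records shrink to four before anything would cross the network, and this is only safe because addition is commutative and associative.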

4.4 Input and Output Types

- Para1

- The MR library provides support for reading input data in several different formats. e.g. “text” mode -> treats each line as a key/value pair: key -> offset in the file, value -> contents of the line.

- Another common supported format stores a sequence of key/value pairs sorted by key.

- Each input type implementation knows how to split itself into meaningful ranges for processing as separate map tasks (e.g. text mode’s range splitting ensures that range splits occur only at line boundaries)

- Users can add support for a new input type by providing an implementation of a simple reader interface, though most users just use one of a small number of predefined input types.

- Para2

- A reader doesn’t necessarily need to provide data read from a file. e.g. It’s easy to define a reader that reads records from a database, or from data structures mapped in memory.

- Para3

- In a similar fashion, we support a set of output types for producing data in different formats and it is easy for user code to add support for new output types.

- summary:

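- We can define the reader for an input or output type ourselves (it determines how the data is split for the Map function), and Map and Reduce are also ours to define. The system also ships plenty of predefined types for us to use!

- Input does not have to come from a file: reading from a database or from in-memory data structures works too!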

4.5 Side-effects

- In some cases, users of MapReduce have found it convenient to produce auxiliary files as additional outputs from their map and/or reduce operators. We rely on the application writer to make such side-effects atomic and idempotent. Typically the application writes to a temporary file and atomically renames this file once it has been fully generated.

- We do not provide support for atomic two-phase commits of multiple output files produced by a single task. Therefore, tasks that produce multiple output files with cross-file consistency requirements should be deterministic. This restriction has never been an issue in practice.

- The side effects here are the auxiliary output files! By writing to a temporary file and renaming it, a single file can still be committed atomically without a two-phase commit protocol!

4.6 Skipping Bad Records

- Para1

- Sometimes there are bugs in user code that cause the Map or Reduce functions to crash deterministically on certain records. Such bugs prevent a MapReduce operation from completing.

- The usual course of action is to fix the bug, but sometimes this is not feasible; perhaps the bug is in a third-party library for which source code is unavailable.

- Sometimes it is acceptable to ignore a few records, for example when doing statistical analysis on a large data set. We provide an optional mode of execution where the MapReduce library detects which records cause deterministic crashes and skips these records in order to make forward progress.

- In short: buggy code causes crashes, but some of that code lives in a library and cannot be fixed, so we can choose to give up on the few records that trigger the bug!

- Para2

- Each worker process installs a signal handler that catches segmentation violations and bus errors. Before invoking a user Map or Reduce operation, the MapReduce library stores the sequence number of the argument in a global variable.

- If the user code generates a signal, the signal handler sends a “last gasp” UDP packet that contains the sequence number to the MapReduce master. When the master has seen more than one failure on a particular record, it indicates that the record should be skipped when it issues the next re-execution of the corresponding Map or Reduce task.

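- Put simply: whenever a worker hits a record it cannot process, it sends the master a “last gasp” UDP packet carrying the record’s sequence number. Once the master sees failures repeat on one particular record, it marks that record to be skipped. (After a crash the current task stops, and the master re-executes it on another machine. If workers keep reporting “I died at this position”, the same position as the previous crash, then the next time the master assigns the task it tells the worker to skip that record.)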
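The skip logic can be simulated without signals or UDP. In this sketch a Python exception stands in for the crash signal and a method call stands in for the “last gasp” packet; `SkipTracker`, `RecordCrash`, and `run_map_task` are hypothetical names.

```python
from collections import Counter

class RecordCrash(Exception):
    def __init__(self, seq_no):
        super().__init__(f"crash on record {seq_no}")
        self.seq_no = seq_no

class SkipTracker:
    """Master-side bookkeeping for crash reports (hypothetical layout)."""
    def __init__(self):
        self.failures = Counter()

    def report(self, task_id, seq_no):
        self.failures[(task_id, seq_no)] += 1

    def to_skip(self, task_id):
        # Skip a record once more than one failure has been seen on it.
        return {s for (t, s), n in self.failures.items() if t == task_id and n > 1}

def run_map_task(records, user_map, skip):
    out = []
    for seq_no, rec in enumerate(records):
        if seq_no in skip:
            continue
        try:
            out.append(user_map(rec))
        except Exception as exc:
            raise RecordCrash(seq_no) from exc   # stands in for the signal handler
    return out

def user_map(rec):
    return 100 // rec   # crashes deterministically on rec == 0

tracker, attempts, result = SkipTracker(), 0, None
while result is None:
    attempts += 1
    try:
        result = run_map_task([5, 0, 20], user_map, tracker.to_skip("m0"))
    except RecordCrash as crash:
        tracker.report("m0", crash.seq_no)
print(attempts, result)
```

The task fails twice on the same record, and only on the third execution does the master tell the worker to skip it.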

4.7 Local Execution

- Debugging problems in Map or Reduce functions can be tricky, since the actual computation happens in a distributed system, often on several thousand machines, with work assignment decisions made dynamically by the master.

- To help facilitate debugging, profiling, and small-scale testing, we have developed an alternative implementation of the MapReduce library that sequentially executes all of the work for a MapReduce operation on the local machine.

- Controls are provided to the user so that the computation can be limited to particular map tasks. Users invoke their program with a special flag and can then easily use any debugging or testing tools they find useful (e.g. gdb). (In short, this is about debugging and testing: you can restrict the run to particular map tasks and use any debug or test tool you like!)

4.8 Status Information

- The master runs an internal HTTP server and exports a set of status pages for human consumption. The status pages show the progress of the computation, such as how many tasks have been completed, how many are in progress, bytes of input, bytes of intermediate data, bytes of output, processing rates, etc. The pages also contain links to the standard error and standard output files generated by each task.

- The user can use this data to predict how long the computation will take, and whether or not more resources should be added to the computation. These pages can also be used to figure out when the computation is much slower than expected. (Useful for prediction, dynamic adjustment, and spotting problems.)

- In addition, the top-level status page shows which workers have failed, and which map and reduce tasks they were processing when they failed. This information is useful when attempting to diagnose bugs in the user code.

4.9 Counters

- Para1:

- MR library provides a counter facility to count occurrences of various events. e.g. user code may want to count total number of words processed or the number of German documents indexed, etc.

- A counter facility is provided!

- Para2:

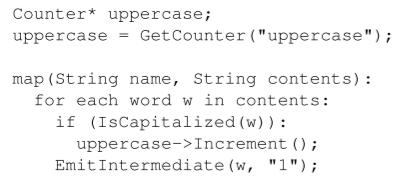

- To use this facility, user code creates a named counter object and then increments the counter appropriately in the map and/or reduce function.

Example:

- Para3:

- The counter values from individual worker machines are periodically propagated to the master (piggybacked on the ping response). The master aggregates the counter values from successful map and reduce tasks and returns them to the user code when the MapReduce operation is completed.

- The current counter values are also displayed on the master status page so that a human can watch the progress of the live computation. When aggregating counter values, the master eliminates the effects of duplicate executions of the same map or reduce task to avoid double counting.(Status will show the live computation of the counter. When aggregating, master will avoid double counting.)

- Duplicate executions can arise from our use of backup tasks and from re-execution of tasks due to failures.

- Para4:

- Some counter values are automatically maintained by the MapReduce library, such as the number of input key/value pairs processed and the number of output key/value pairs produced.

- Para5:

- Users have found the counter facility useful for sanity checking the behavior of MapReduce operations.

- For example, in some MapReduce operations, the user code may want to ensure that the number of output pairs produced exactly equals the number of input pairs processed, or that the fraction of German documents processed is within some tolerable fraction of the total number of documents processed.
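The de-duplication during aggregation can be sketched as "count each task once". The `aggregate` function and the report format are assumptions; the paper only states that duplicate executions must not be double counted.

```python
from collections import Counter

def aggregate(reports):
    """reports: list of (task_id, counters) from successful task executions.

    Only the first report per task is counted, so backup or re-executed
    copies of the same task do not double count.
    """
    seen, total = set(), Counter()
    for task_id, counters in reports:
        if task_id in seen:
            continue
        seen.add(task_id)
        total.update(counters)
    return total

reports = [
    ("m0", {"uppercase": 3, "input_pairs": 10}),
    ("m1", {"uppercase": 1, "input_pairs": 8}),
    ("m0", {"uppercase": 3, "input_pairs": 10}),   # duplicate (backup) execution
]
totals = aggregate(reports)
print(dict(totals))
```

A sanity check like "output pairs equal input pairs" would then be a simple comparison of two entries in `totals`.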

5. Performance

- Measure the performance of MR on two computations on a large cluster of machines.

- One -> search through one terabyte of data looking for a particular pattern.

- Two -> Sort approximately one terabyte of data

5.1 Cluster Configuration

- A cluster consisted of approximately 1800 machines.

- Each machine ->

- two 2 GHz Intel Xeon processors with Hyper-Threading enabled

- 4 GB of memory

- two 160 GB IDE disks

- a gigabit Ethernet link

- Machines are arranged in a two-level tree-shaped switched network with 100-200 Gbps of aggregate bandwidth available at the root.

- All of the machines were in the same hosting facility and therefore the round-trip time between any pair of machines was less than a millisecond.

- Out of the 4GB of memory, approximately 1-1.5GB was reserved by other tasks running on the cluster. The programs were executed on a weekend afternoon, when the CPUs, disks, and network were mostly idle.

5.2 Grep

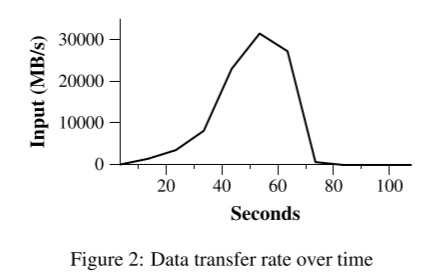

- The grep program scans through $10^{10}$ 100-byte records, searching for a relatively rare three-character pattern (the pattern occurs in 92,337 records). The input is split into approximately 64 MB pieces (M = 15000), and the entire output is placed in one file (R = 1).

- Figure 2 shows the progress of the computation over time. The Y-axis shows the rate at which the input data is scanned. The rate gradually picks up as more machines are assigned to this MapReduce computation, and peaks at over 30 GB/s when 1764 workers have been assigned. As the map tasks finish, the rate starts dropping and hits zero about 80 seconds into the computation. The entire computation takes approximately 150 seconds from start to finish.

- This includes about a minute of startup overhead. The overhead is due to the propagation of the program to all worker machines, and delays interacting with GFS to open the set of 1000 input files and to get the information needed for the locality optimization.
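A quick check that the grep numbers fit together, taking the input to be $10^{10}$ records of 100 bytes and a 64 MB split size as $64 \cdot 2^{20}$ bytes (the exact definition of MB is my assumption):

```python
records, record_bytes = 10**10, 100
total_bytes = records * record_bytes         # 10**12 bytes, about 1 TB
split_bytes = 64 * 2**20                     # 64 MB pieces
num_splits = total_bytes / split_bytes
print(num_splits)                            # about 14901, consistent with M = 15000

peak_rate, workers = 30e9, 1764              # 30 GB/s at peak with 1764 workers
per_worker_mb = peak_rate / workers / 1e6
print(per_worker_mb)                         # about 17 MB/s scanned per worker
```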

5.3 Sort

The sort program sorts $10^{10}$ 100-byte records (approximately 1 terabyte of data). This program is modeled after the TeraSort benchmark.

Para1

- The sorting program consists of less than 50 lines of user code. A three-line Map function extracts a 10-byte sorting key from a text line and emits the key and the original text line as the intermediate key/value pair.

- We used a built-in Identity function as the Reduce operator. This function passes the intermediate key/value pair unchanged as the output key/value pair. The final sorted output is written to a set of 2-way replicated GFS files (i.e. 2 terabytes are written as the output of the program).

Para2

- As before, the input data is split into 64MB pieces (M = 15000). We partition the sorted output into 4000 files (R = 4000). The partitioning function uses the initial bytes of the key to segregate it into one of R pieces.

Para3

- Our partitioning function for this benchmark has built-in knowledge of the distribution of keys. In a general sorting program, we would add a pre-pass MapReduce operation that would collect a sample of the keys and use the distribution of the sampled keys to compute split points for the final sorting pass.

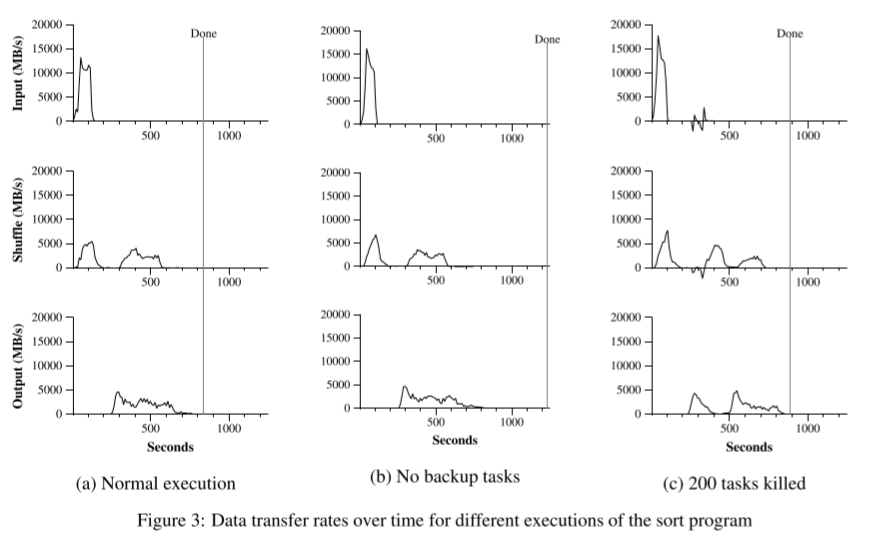

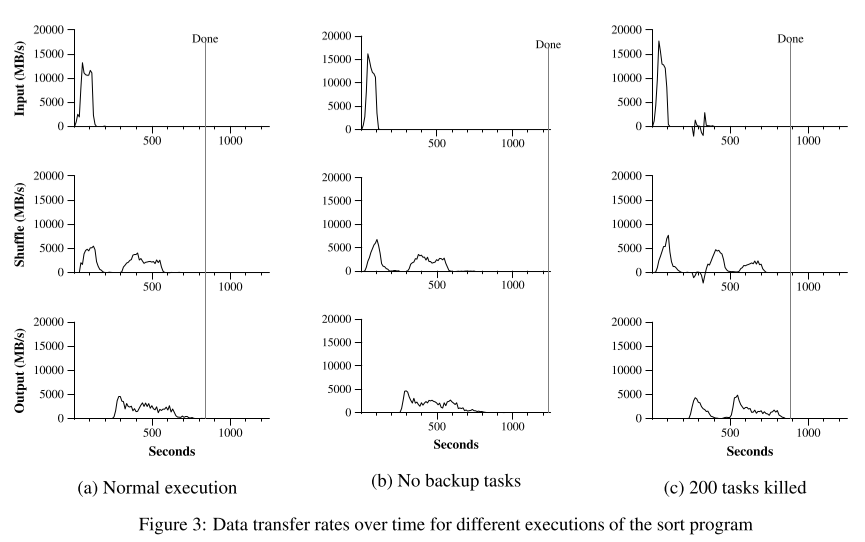

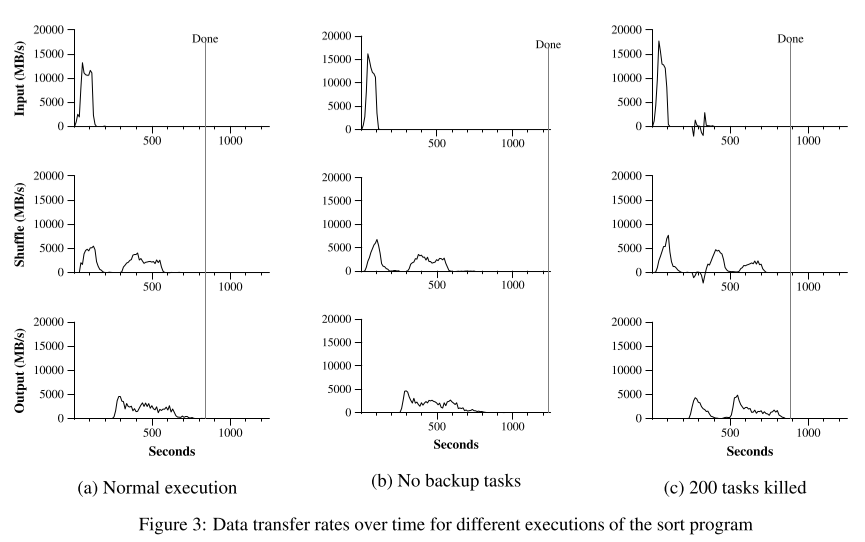

Analysis of the picture:

- Figure 3 (a) shows the progress of a normal execution of the sort program. The top-left graph shows the rate at which input is read. The rate peaks at about 13 GB/s and dies off fairly quickly since all map tasks finish before 200 seconds have elapsed. Note that the input rate is less than for grep. This is because the sort map tasks spend about half their time and I/O bandwidth writing intermediate output to their local disks. The corresponding intermediate output for grep had negligible size.

- The middle-left graph shows the rate at which data is sent over the network from the map tasks to the reduce tasks. This shuffling starts as soon as the first map task completes. The first hump in the graph is for the first batch of approximately 1700 reduce tasks (the entire MapReduce was assigned about 1700 machines, and each machine executes at most one reduce task at a time). Roughly 300 seconds into the computation, some of these first batch of reduce tasks finish and we start shuffling data for the remaining reduce tasks. All of the shuffling is done about 600 seconds into the computation.

- The bottom-left graph shows the rate at which sorted data is written to the final output files by the reduce tasks. There is a delay between the end of the first shuffling period and the start of the writing period because the machines are busy sorting the intermediate data. The writes continue at a rate of about 2-4 GB/s for a while. All of the writes finish about 850 seconds into the computation. Including startup overhead, the entire computation takes 891 seconds. This is similar to the current best reported result of 1057 seconds for the TeraSort benchmark [18]. (The gap is there because the machines are busy sorting the intermediate data!)

- Last Para:

- A few things to note: the input rate is higher than the shuffle rate and the output rate because of our locality optimization – most data is read from a local disk and bypasses our relatively bandwidth constrained network. The shuffle rate is higher than the output rate because the output phase writes two copies of the sorted data (we make two replicas of the output for reliability and availability reasons).

- We write two replicas because that is the mechanism for reliability and availability provided by our underlying file system. Network bandwidth requirements for writing data would be reduced if the underlying file system used erasure coding [14] rather than replication.

5.4 Effect of Backup Tasks

- In Figure 3 (b), we show an execution of the sort program with backup tasks disabled. The execution flow is similar to that shown in Figure 3 (a), except that there is a very long tail where hardly any write activity occurs. After 960 seconds, all except 5 of the reduce tasks are completed. However these last few stragglers don’t finish until 300 seconds later. The entire computation takes 1283 seconds, an increase of 44% in elapsed time. (The curve looks like the one in (a), but (b) clearly finishes much later because of the stragglers.)

5.5 Machine Failures

- In Figure 3 (c), we show an execution of the sort program where we intentionally killed 200 out of 1746 worker processes several minutes into the computation. The underlying cluster scheduler immediately restarted new worker processes on these machines (since only the processes were killed, the machines were still functioning properly).

- The worker deaths show up as a negative input rate since some previously completed map work disappears (since the corresponding map workers were killed) and needs to be redone. The re-execution of this map work happens relatively quickly. The entire computation finishes in 933 seconds including startup overhead (just an increase of 5% over the normal execution time).
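As a quick sanity check on the percentages quoted in Sections 5.4 and 5.5, the sketch below recomputes each slowdown relative to the 891-second normal run. The function name `slowdown_pct` is my own; the timings are the ones quoted above.

```python
def slowdown_pct(elapsed_s: float, baseline_s: float) -> float:
    """Relative increase in elapsed time over the baseline, in percent."""
    return (elapsed_s - baseline_s) / baseline_s * 100


NORMAL_S = 891       # normal execution, Figure 3 (a)
NO_BACKUP_S = 1283   # backup tasks disabled, Figure 3 (b)
KILLED_S = 933       # 200 worker processes killed, Figure 3 (c)

print(f"no backup tasks:    +{slowdown_pct(NO_BACKUP_S, NORMAL_S):.1f}%")  # +44.0%
print(f"200 workers killed: +{slowdown_pct(KILLED_S, NORMAL_S):.1f}%")     # +4.7%
```

So a handful of stragglers costs far more (about 44%) than losing 200 workers mid-run (about 5%), which is the argument for keeping backup tasks enabled.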

6. Experience

Para1:

- We wrote the first version of the MapReduce library in February of 2003, and made significant enhancements to it in August of 2003, including the locality optimization, dynamic load balancing of task execution across worker machines, etc. Since that time, we have been pleasantly surprised at how broadly applicable the MapReduce library has been for the kinds of problems we work on. It has been used across a wide range of domains within Google, including:

- large-scale machine learning problems,

- clustering problems for the Google News and Froogle products,

- extraction of data used to produce reports of popular queries (e.g. Google Zeitgeist),

- extraction of properties of web pages for new experiments and products (e.g. extraction of geographical locations from a large corpus of web pages for localized search), and

- large-scale graph computations.

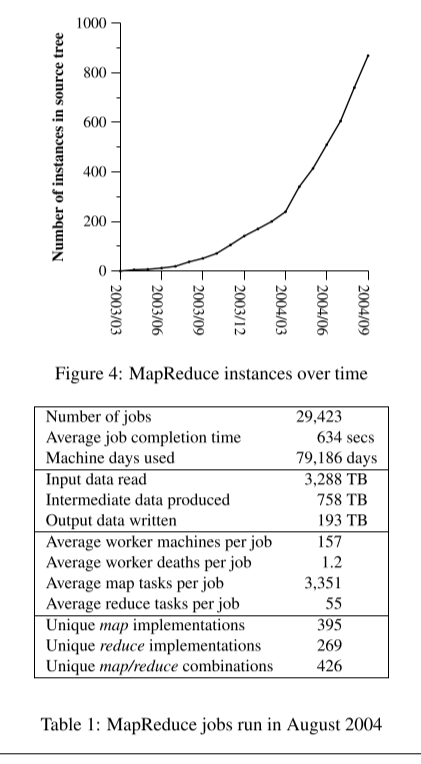

Para2:

- Figure 4 shows the significant growth in the number of separate MapReduce programs checked into our primary source code management system over time, from 0 in early 2003 to almost 900 separate instances as of late September 2004. MapReduce has been so successful because it makes it possible to write a simple program and run it efficiently on a thousand machines in the course of half an hour, greatly speeding up the development and prototyping cycle. Furthermore, it allows programmers who have no experience with distributed and/or parallel systems to exploit large amounts of resources easily.

- At the end of each job, the MapReduce library logs statistics about the computational resources used by the job. In Table 1, we show some statistics for a subset of MapReduce jobs run at Google in August 2004.

6.1 Large-Scale Indexing

- One of our most significant uses of MR to date has been a complete rewrite of the production indexing system that produces the data structures used for the Google web search service. The indexing system takes as input a large set of documents that have been retrieved by our crawling system, stored as a set of GFS files. The raw contents for these documents are more than 20 terabytes of data.

- The indexing process runs as a sequence of five to ten MapReduce operations. Using MapReduce (instead of the ad-hoc distributed passes in the prior version of the indexing system) has provided several benefits:

- The indexing code is simpler, smaller, and easier to understand, because the code that deals with fault tolerance, distribution and parallelization is hidden within the MapReduce library. For example, the size of one phase of the computation dropped from approximately 3800 lines of C++ code to approximately 700 lines when expressed using MapReduce.

- The performance of the MapReduce library is good enough that we can keep conceptually unrelated computations separate, instead of mixing them together to avoid extra passes over the data. This makes it easy to change the indexing process. For example, one change that took a few months to make in our old indexing system took only a few days to implement in the new system.

- The indexing process has become much easier to operate, because most of the problems caused by machine failures, slow machines, and networking hiccups are dealt with automatically by the MapReduce library without operator intervention. Furthermore, it is easy to improve the performance of the indexing process by adding new machines to the indexing cluster.

7. Related Work

- Many systems have provided restricted programming models and used the restrictions to parallelize the computation automatically. For example, an associative function can be computed over all prefixes of an $N$-element array in $\log N$ time on $N$ processors using parallel prefix computations [6, 9, 13]. MapReduce can be considered a simplification and distillation of some of these models based on our experience with large real-world computations. More significantly, we provide a fault-tolerant implementation that scales to thousands of processors. In contrast, most of the parallel processing systems have only been implemented on smaller scales and leave the details of handling machine failures to the programmer. (In one sentence: MR is awesome!)
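The parallel prefix computation mentioned above can be sketched as follows. This is a minimal illustration using the Hillis-Steele scan, which is one well-known way to do it and not necessarily the method used in the cited references. The rounds are simulated sequentially here, but every update inside a round is independent, so $N$ processors could finish a round in one step. The function name `parallel_prefix` is hypothetical.

```python
import operator


def parallel_prefix(values, op=operator.add):
    """Inclusive prefix scan (Hillis-Steele) for an associative `op`.

    Returns the scanned list and the number of rounds used.
    """
    result = list(values)
    n = len(result)
    stride = 1
    rounds = 0
    while stride < n:
        # Build the next round only from the previous round's values,
        # which is what makes all n updates independent of each other.
        result = [
            op(result[i - stride], result[i]) if i >= stride else result[i]
            for i in range(n)
        ]
        stride *= 2
        rounds += 1
    return result, rounds


sums, rounds = parallel_prefix([1, 2, 3, 4, 5, 6, 7, 8])
print(sums)    # [1, 3, 6, 10, 15, 21, 28, 36]
print(rounds)  # 3
```

Eight elements need only three rounds ($\log_2 8 = 3$), which is where the $\log N$ bound comes from. The operator only has to be associative, not commutative, because the left operand always comes from the earlier position.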